Split testing is a crucial element in guaranteeing a successful online business.

Whether you are running Facebook ads, email marketing, or a sales funnel, or building an authority site, split testing the right way can increase your conversions dramatically.

If three hours of work increased your sales 2x, 4x, 10x, or 40x, how much additional revenue would you earn from three months of traffic in that campaign?

“Successful A/B testing can turn your campaign from losing money to break even, break even to profitable, and continually increase your profit margins.”

When I found the one word in my landing page that increased my conversion rate from 25% to 48%, that was a nearly 100% increase in leads with the exact same traffic.

Do more with less by split testing the right way.

Get this wrong and you will be shooting yourself in the foot with each split test.

Get this right and you will be able to make consistent improvements on your conversion rates all across your funnel, thus earning more money with the same amount of traffic!

On your path to success growing your own online business, you will learn (if you aren't sold on it already) that split testing is key.

Specifically, split testing your opt-in pages, headlines, offers, etc. is the key way for you to constantly improve your results.

The simplest form of split testing is what we call A/B tests. You change one variable on a page and then you measure how many people make it through your call to action to the next page.

You only change one variable so you can have clean data that will identify whether that one change created an improvement in your conversion rate, or not.

If you change two variables in one test, you will never know which variable actually gave you the increase or decrease in conversions… But this isn’t about split test best practices, it’s about the biggest mistake most people make when split testing.

For this to make sense, you need to understand the concept of “statistical significance”

Even writing that makes me have flashbacks of high school and college that I’m not totally enjoying… LOL

But this concept is paramount to your success as an Internet marketer…

Statistical Significance

All you really need to know about statistical significance is that the larger the sample size, the more reliable the data and results. Conversely, the smaller the sample size… the more unreliable the result.
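To put rough numbers on that idea, here is a minimal Python sketch. It assumes a hypothetical page converting at 40% (not a figure from my test) and uses the standard normal-approximation margin of error, which is only a rough guide at very small sample sizes. The function name `margin_of_error` is made up for this illustration.

```python
import math

def margin_of_error(rate, visitors, z=1.96):
    """Approximate 95% margin of error for an observed conversion rate."""
    return z * math.sqrt(rate * (1 - rate) / visitors)

# Hypothetical 40% conversion rate, measured at different sample sizes.
for visitors in (25, 100, 500, 1000):
    moe = margin_of_error(0.40, visitors)
    print(f"{visitors:>5} visitors: 40% +/- {moe * 100:.1f} points")
```

With 25 visitors the uncertainty is around 19 percentage points in either direction, while at 1,000 visitors it shrinks to about 3 points. Same page, same rate, very different reliability.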

In short, rookies often declare a winner before having statistically significant data… This is the #1 problem in split testing!

Basing decisions on bad data will destroy your funnel conversions!

Already, we're in too deep on theory here… That's a lot of words about some complex and vitally important ideas.

To make sure this is crystal-clear for you… You will see a real world split test example from my site next!

You will see the split test set up and the data from 2 separate time periods that will show you through graphs exactly what statistical significance means… And it will show you why you need to base your decisions on statistically significant data!

Because you need to know what to look for in your split test dashboard to make the right decisions!

Ready?

Great… Let’s get to it!

Real World Split Test Example

First, the test…

For me, on this site, my most important conversion point is the opt-in for my email list. This is at milesbeckler.com/free-course

Within this page, I’m testing a new headline to see if I can increase conversions through the wording on the page.

The old headline, known as my control, stated: “FREE COURSE: Get My Simple 7 Step Blueprint That Has Made More Than $1,000,000 Online…”

The new headline I’m testing, my variant, states: “Free Course Reveals My 7 Step Blueprint That Took Me From Side Hustle to Million Dollar Business”

Super simple split test, right?

There are really only a couple of things changed in the headline… Taking the “reveal” approach at the beginning and trying to meet the reader where they're at (side hustle) and help them understand where the course will take them (million-dollar business).

So this was my hypothesis… That the new “path revealed” version would outperform the past version I was running.

I set up a new split test with Dave’s help from MyFunnelDev.com… I just emailed him the new headline and he got it all put into place for me!

Split Test Results

Let's get into the data so we can get back to the whole statistical significance idea!

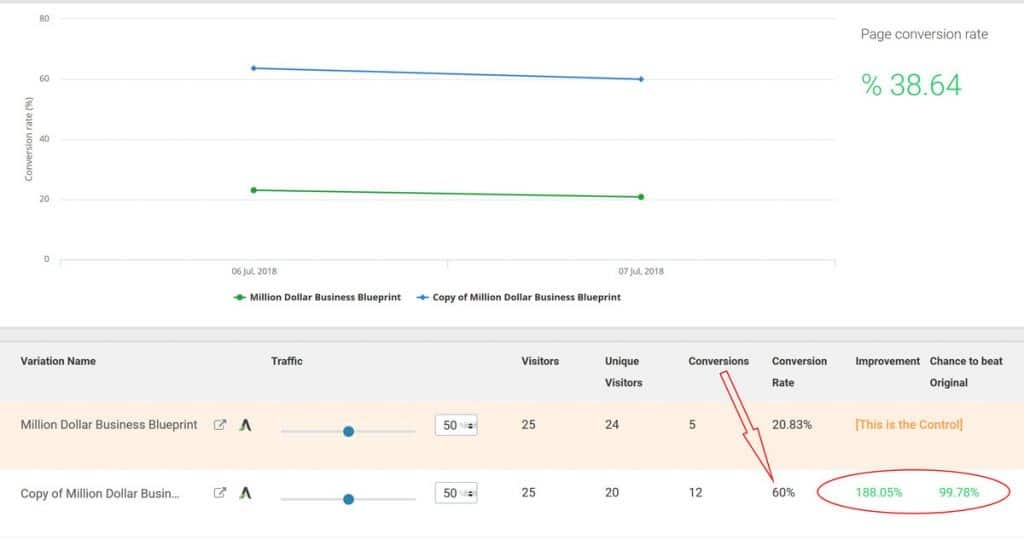

By the 2nd day with 50 visitors through the test, the results looked amazing!

It looked like a dramatic victory was at hand for the new test variation. And here is the trap that most new marketers fall into (that you are learning how to avoid, right now)!

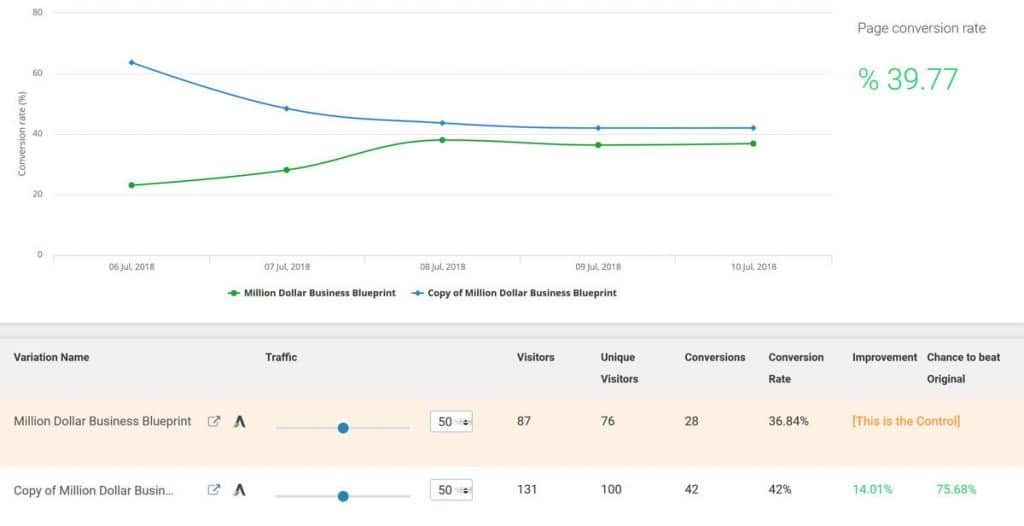

Take a look at the split test data, below:

Out of the gates, the new variation was converting at 60% and the old variation was only converting at 20%… That is a 200% increase in conversions. According to the split testing tool I use, there was a 99.78% chance that this new variation would beat the original.
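If you want to check the lift math yourself, here is a small Python sketch. The helper name `relative_lift` is just for illustration; the rates are the ones quoted in this post.

```python
def relative_lift(control_rate, variant_rate):
    """Relative change of the variant over the control, as a fraction (1.0 = +100%)."""
    return (variant_rate - control_rate) / control_rate

# Early numbers from this headline test: 20% control vs 60% variant.
print(f"Early headline test lift: {relative_lift(0.20, 0.60):.0%}")

# The one-word landing page change mentioned earlier: 25% to 48%.
print(f"One-word change lift: {relative_lift(0.25, 0.48):.0%}")
```

Going from 20% to 60% is a 200% lift, and going from 25% to 48% is a 92% lift, which is where "nearly 100%" comes from.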

I went to a couple of “statistical significance calculators” and entered in the data based on the number of visits and conversions… And they said “your test is statistically significant you can count on these results”
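For the curious, here is roughly the kind of check those calculators run. This is a sketch of a standard two-proportion z-test in Python, not the exact method used by my split testing tool or by any particular calculator. The visitor and conversion counts are assumptions I'm backing out of the percentages (5 of 25 for the control, 15 of 25 for the variant), and the function name is hypothetical.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variations convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Assumed counts: 5 of 25 for the control (20%), 15 of 25 for the variant (60%).
z, p = two_proportion_z_test(5, 25, 15, 25)
print(f"z = {z:.2f}, p = {p:.4f}")
```

On those assumed counts the p-value comes out well under the usual 0.05 cutoff, so the math really does say "significant". That is exactly why the early numbers are so tempting.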

But I didn’t buy it…

With only 50 visitors split between 2 variations… 25 visitors each and fewer than 20 conversions total, I knew from experience that the future might bring a very different result from what I was seeing initially.

It is impossible to trust “statistically significant” data with such a small sample size!

So I waited patiently…

Hoping that my astounding results continued, but more importantly… Focused on allowing the data to accrue in large enough numbers that I could truly count on the results.

Today, 5 days after starting the test, I took a look back in on the data. I’ve now had over 200 visitors through the test and over 70 conversions… About 3 to 4 times the data.

What is the result today?

Well… The results are much closer.

The new variation is still winning, but it is only outpulling the control by a measly 14%. Also, the “chance to beat original” has dropped from 99% confidence to only 75% confidence!

The most important key to split testing & “Statistical Significance” lies in the graph above.

Notice how the gap between them has narrowed significantly as the number of visitors and conversions continues to increase. This is completely normal in the world of split testing.

A rookie conversion optimization student will often make the mistake of turning off the split test after seeing the first day's results.

All of the tools said it was statistically significant… It was outperforming by almost 200%… How could that new variation not be the winner?

Well… This is why the biggest mistake that most people make in split testing is turning off their tests too early!

At this point, I’m still not done with my test… I felt it painted such a clear picture to help you deeply understand what statistical significance means that it was time to write this blog post.

I like to see a minimum of 7 days on a single split test. This allows me to balance out the differences in traffic between the weekends and the weekdays. Honestly, I prefer two-week tests… 2 Saturdays, 2 Wednesdays, etc.

I also like to see a total of 500 conversions or a minimum of 1000 visits.

At this rate, it will take approximately 20 days of running this test for me to get the kind of data I am confident I can rely on.
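Here is a quick way to run that kind of estimate for your own test, sketched in Python. The daily traffic figures are assumptions for illustration: "over 200 visitors" in 5 days means at least 40 per day, and 50 per day is a hypothetical slightly higher rate. The helper name `days_to_target` is made up.

```python
import math

def days_to_target(target, per_day):
    """Whole days needed to reach a target count at a steady daily rate."""
    return math.ceil(target / per_day)

# Assumed daily visitor rates; swap in the numbers from your own dashboard.
for visitors_per_day in (40, 50):
    days = days_to_target(1000, visitors_per_day)
    print(f"{visitors_per_day} visitors/day: about {days} days to reach 1000 visits")
```

At 40 visitors a day it takes 25 days to reach 1000 visits, and at 50 a day it takes 20, so a small change in daily traffic moves the finish line quite a bit.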

One other quick note here… This little headline tweak may not have been the best example of a split test to start with. I’m literally testing a new tool and a new team, so I whipped up something quick to just get the ball rolling.

Jay Abraham has a quote… “Test screams not whispers”

What he means by that quote is to be sure you’re focusing your testing on the big things… A totally different offer, for example. A completely revolutionary headline, for example.

I’ve already run several big tests on what I’m giving away as my opt-in.

Last year, my best giveaway was my free Facebook ads case study “FREE CASE STUDY: How I Generated 13,943 Leads & 188 Customers For $888.84 With Facebook Pay Per Click Advertising”

But this new course has been outpulling that Facebook funnel for many months now.

So, all in all… I’ve been split testing screams and an occasional whisper.

But that’s really not the point here.

The goal has been to help you understand how deceptive the statistics can be when you first launch a new split test.

You now understand that patience and discipline with your split testing are key to avoiding the biggest mistake of turning your split tests off too soon.

Waiting to see 500 conversions, 1000 clicks, or 14 days of data are all good targets for you to shoot for with your data, before making any decisions about which split test variation wins.
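If it helps, those targets can be turned into a simple checklist. This Python sketch is just one way to express them; the function name `targets_met` and the idea of checking all three at once are my own illustration, and the example numbers are rounded from the day-5 data above.

```python
def targets_met(conversions, visits, days_running,
                min_conversions=500, min_visits=1000, min_days=14):
    """Report which of the suggested data targets a split test has reached."""
    return {
        "conversions": conversions >= min_conversions,
        "visits": visits >= min_visits,
        "days": days_running >= min_days,
    }

# Roughly where this headline test stood on day 5.
status = targets_met(conversions=70, visits=200, days_running=5)
print(status)
if not any(status.values()):
    print("No target reached yet: keep the test running.")
```

On day 5 none of the three targets had been hit, which is the whole point: the early numbers were not ready to be acted on.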

A/B Testing Examples

Testing a headline only – the copy, images, offer, page layout, backgrounds, everything else being the same except the two headlines you are split testing.

Testing your offer only – the headline, copy, images, page layout, backgrounds, everything else being the same except the offer.

Testing the efficacy of a layout – the copy, images, offer, headlines, everything else being the same except the page layout differences you are A/B testing.

Focus your efforts on one item at a time, that’s it.

That’s why it’s referred to as A/B testing: one element being tested to eliminate the guesswork of what will increase your conversions.

Data-driven decisions, instead of our intuition.

Split Testing Priorities for Maximum Results

There’s a widely used example of button color as a split test.

For example, on one page you’ll have a pink button and on another, a green one.

This is not a great test, because the color of the button will not have a large enough psychological effect on your user to push their action in any measurable direction. But it is a clear example.

So for button color, just make sure your button has the same color throughout your funnel. Consistency in your funnel is paramount, as discussed in being consistent in your sales funnel.

Now, a great item to split test is your headline! This is a more difficult example than the simple button color one, but the results you can get by testing headlines can revolutionize your business.

If your headline doesn’t punch the reader in the head and make them enthralled and curious about your offer, then it doesn’t matter if the button color is right or not.

Split testing is practical for the significant elements of your landing page.

Your headline is the number one variable to test. Your offer is a close second.

When testing an offer, you could try giving a choice between a free ebook or a free video to see which format works.

It can be the same content and the same headline and same sales format, just a different medium of conveying your informational product.

Testing format is a clear-cut way to see what works for your target audience.

Sales copy is a smaller, yet still measurable, variable to split test. Start with your opt-in pages, where you really shouldn’t have much sales copy.

A subhead or bullet points, for example. You want to ensure the copy draws the reader to the bottom of the page. If not, you’ve lost them somewhere along the way.

Testing helps you figure out how to keep viewers engaged. Model successful copy in your industry if you need help dialing this in.

Once you’ve run testing on your headline, offer and sales copy, test your layout.

Start with a simple layout and add on, shift around, or take away little things slowly.

You don’t want an opt-in page filled with distractions and then have to undertake the painstaking process of removing them one by one to see why it isn’t upping your opt-in rate.

A/B Testing Takes Patience

Leaving your split testing samples alone is the best way to gather results.

It can be tough to not look at your numbers for several days.

Split testing on advertising takes less time than A/B web testing: you’ll see a thousand clicks on a Facebook pay per click ad faster than you’ll gather a thousand opt-ins on your squeeze page.

You’ll garner a thousand opt-ins more quickly than a thousand customers buying on your sales page.

Do split tests at every level.

When something works on the ad level, keep it and move on to testing your opt-in page.

Then move on down your funnel.

So if it was a particular headline that outperformed all your other ad headlines, test a variation of it on your opt-in page. Then move on to imagery on the ad next time.

Success with Split Testing

If you don’t have a way to perform split testing efficiently, it can be overwhelming.

The most efficient method I have found is the Thrive Optimize plugin by Thrive Themes.

If you are using WordPress, this video will show you how to set up an A/B test using Google Analytics Experiments.

Remember, patience is your biggest asset when performing A/B testing.

You’ll want to see at least 500 conversions or a thousand visits, and a minimum of two weeks of data, before making any decisions.

Split test at every level of your process and be patient. Incremental gains equal substantial shifts for your business.

The results from split testing headlines, offers, and sales copy are nothing short of transformational.